RNA-seq produces millions of sequences from complex RNA samples. With this powerful approach, you can:

- Measure gene expression.

- Discover and annotate complete transcripts.

- Characterize alternative splicing and polyadenylation.

The RNA-seqlopedia provides an overview of RNA-seq and of the choices necessary to carry out a successful RNA-seq experiment.

0. RNA-Seq workflow

Copy this link to clipboard

The purpose of this site is to provide a comprehensive discussion of each of the steps that are involved in performing RNA-seq, and to highlight the primary options that are available along with some guidance for choosing between various options. The primary content is topically organized according to the work-flow of a typical RNA-seq experiment.

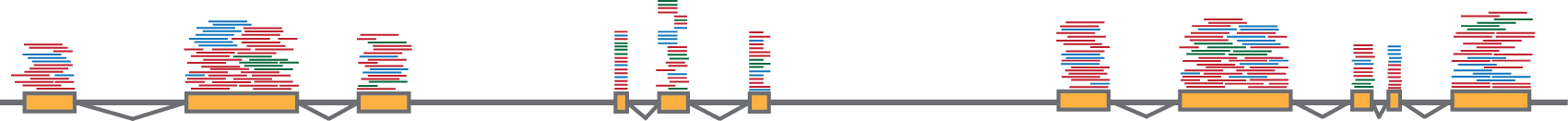

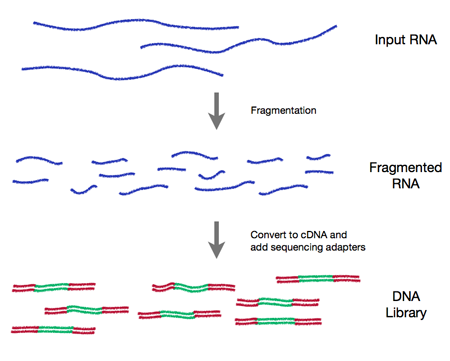

In all cases an RNA-seq experiment involves making a collection of cDNA fragments which are flanked by specific constant sequences (known as adapters) that are necessary for sequencing (see Figure 0.1). This collection (referred to as a library) is then sequenced using short-read sequencing which produces millions of short sequence reads that correspond to individual cDNA fragments.

A typical RNA-seq experiment consists of the following steps:

-

Design ExperimentSet up the experiment to address your questions.

-

RNA PreparationIsolate and purify input RNA.

-

Prepare LibrariesConvert the RNA to cDNA; add sequencing adapters.

-

SequenceSequence cDNAs using a sequencing platform.

-

AnalysisAnalyze the resulting short-read sequences.

Each of the five chapters below are dedicated to one of these steps.

Figure 0.1 RNA-Seq workflow

1. Experimental Design

Copy this link to clipboard

-

Design ExperimentSet up the experiment to address your questions.

-

RNA PreparationIsolate and purify input RNA.

-

Prepare LibrariesConvert the RNA to cDNA; add sequencing adapters.

-

SequenceSequence cDNAs using a sequencing platform.

-

AnalysisAnalyze the resulting short-read sequences.

1.1 Overview

Copy this link to clipboard

When designing an RNA-seq experiment researchers are faced with choosing between many experimental options, and decisions must be made at each step of the process. In some cases the choice one makes will have little impact on the quality of the experimental data. However, in other cases inappropriate decisions can result in spending a great deal of time and money only to obtain nearly useless data. It is therefore imperative that one carefully considers these choices before launching into a time consuming and costly RNA-seq experiment.

This stage of the experiment is arguably the most important but often receives the least amount of attention. Before one even begins an RNA-seq experiment they should have an understanding of each step and should put together a carefully devised experimental plan.

1.2 Identify the primary experimental objective.

Copy this link to clipboard

The types of information that can be gained from RNA-seq can be divided into two broad categories: qualitative and quantitative.

- Qualitative data includes identifying expressed transcripts, and identifying exon/intron boundaries, transcriptional start sites (TSS), and poly-A sites. Here, we will refer to this type of information as "annotation".

- Quantitative data includes measuring differences in expression, alternative splicing, alternative TSS, and alternative polyadenylation between two or more treatments or groups. Here we focus specifically on experiments to measure differential gene expression (DGE).

These two types of data are related and to some degree are inseparable. For instance, high quality annotation can lead to greater accuracy in mapping which can lead to higher quality measures of gene expression. Similarly, accurate annotation requires some degree of quantification. For instance, the simple existence of one or two reads that map to an intergenic region is not strong evidence for the existence of a gene at that locus.

It is important to realize that experiments that are designed to measure quantitative changes have requirements that differ from those that are designed for annotation, and it would be difficult and expensive to design a single experiment that satisfies the requirements of both. It is therefore important to first identify the primary goal of an RNA-seq experiment so that the experiment can be designed to maximize the objective. Below, we highlight some of the important distinctions between these two objectives with regards to RNA-seq. Further guidelines can be found in Table 1.1.

Table 1.1 Recommendations for RNA-seq options based upon experimental objectives.

| Criteria | Annotation | Differential Gene Expression |

| Biological replicates | Not necessary but can be useful | Essential |

| Coverage across the transcript | Important for de Novo transcript assembly and identifying transcriptional isoforms | Not as important; however the only reads that can be used are those that are uniquely mappable. |

| Depth of sequencing | High enough to maximize coverage of rare transcripts and transcriptional isoforms | High enough to infer accurrate statistics |

| Role of sequencing depth | Obtain reads that overlap along the length of the transcript | Get enough counts of each transcript such that statistical inferences can be made |

| DSN | Useful for removing abundant transcripts so that more reads come from rarer transcripts | Not recommended since it can skew counts |

| Stranded library prep | Important for de Novo transcript assembly and identifying true anti-sense trancripts | Not generally required especially if there is a reference genome |

| Long reads (>80 bp) | Important for de Novo transcript assembly and identifying transcriptional isoforms | Not generally required especially if there is a reference genome |

| Paired-end reads | Important for de Novo transcript assembly and identifying transcriptional isoforms | Not important |

1.3 Annotation

Copy this link to clipboard

The goal of annotation is to identify genes and genic architecture by analyzing short-sequence reads derived from expressed RNA. Thus, the most important parameter is that the sequence reads evenly cover each transcript, including both ends.

Coverage is primarily a function of the method used to prepare the library. Each library prep methods suffer from specific types of biases. For instance, oligo-dT priming for first-strand synthesis can be used to accurately annotate 3′-ends but often fails to evenly cover the 5′-end. Random priming suffers from uneven coverage due to sequence/structure priming biases, and can result in poor coverage of either of the ends. Some library preparation methods have been specifically designed to provide even transcript coverage, such as the SMARTer system from Clontech. Currently few publications have been devoted to evaluating the coverage and biases of different library prep methods; however, in one study (Levin et al 2010) it was observed that the dUTP-stranded method gave the best overall performance.

Regardless of the method used to prepare the libraries, each method still results in uneven coverage of individual transcripts. In order to get sequence reads that span the entire transcript more reads are required (i.e. deeper sequencing). Since the primary goal of annotation is to characterize what is present rather than how much of it is present annotation experiments can greatly benefit from enrichment procedures such as DSN normalization which reduces the proportion of reads that come from highly expressed RNAs, effectively increasing the reads that come from rarer transcripts without requiring deeper sequencing.

Two of the biggest challenges related to using short-read sequencing for annotation are that the sequence reads are much shorter than the biological transcripts and that in many cases a reference genome is unavailable. Both of these issues create problems for mapping and de novo transcript assembly. These problems can be partially alleviated by using sequencing strategies that produce longer sequence reads, paired-end reads, and stranded library preparation methods. Third generation sequencing platforms, such as PacBio, have much longer reads, which also can overcome these challenges, albeit at a greater cost than short-read sequencing.

1.4 Differential gene expression (DGE)

Copy this link to clipboard

The primary goal of DGE is to quantitatively measure differences in the levels of transcripts between two or more treatments or groups. At one level this is as simple as comparing the counts for reads that come from each transcript. However, simple read counts alone are neither sufficient for identifying differentially expressed genes nor for quantifying the degree of differences. This is largely because there is no way to know how accurately such counts reflect what was actually present in the original sample, and there is no indication of how widely expression normally varies in a given group or without treatment. Therefore in order to appropriately interpret differences in read counts one must also obtain information regarding the variances that are associated with these numbers. Several types of variances contribute to RNA-seq data including:

- Sampling variance: Even though a sequencing run is capable of producing millions of reads, these represent only a small fraction of the nucleic acid that is actually present in the library. There is therefore a built in sampling variance.

- Technical variance: Library preparation and sequencing procedures involve a series of complex chemical reactions which all contribute to between sample variance.

- Biological variance: Ultimately we are interested in measuring differences between different biological systems. Biological systems are inherently complex and very sensitive to perturbations. Thus, even in the absence of sampling and technical variance biological variance will always exist. Specifically the variance we are referring to is the nascent variance that is present within a treatment or control group.

DGE experiments must be designed to accurately measure both the counts of each transcript and the variances that are associated with those numbers. Experiments that are designed to annotate transcripts need to focus on getting even coverage across transcripts. However, in the case of DGE transcript abundance is typically measured by counting reads that map uniquely to that transcript, and even coverage along the length of the transcript is not strictly required. Similarly, long reads, paired-end reads, and stranded library preparation methods are not as important for DGE especially if a reference genome is available. Instead DGE experiments need to focus time and expense on replicates in order to obtain accurate measures of variances.

1.4.1 Replication and DGE

Copy this link to clipboard

Decisions about the number and types of replicates (individual units of statistical inference) are driven by both extrinsic and intrinsic factors. Extrinsic factors include cost, availability of samples, and feasibility of experiments. Intrinsic factors are often more difficult to grasp without prior information about the system and definitely more ambiguous from a decision standpoint. Intrinsic considerations include the degree of transcriptional variability among samples, whether certain genes or transcripts are of special interest, whether these genes of interest are expressed at low levels relative to other members of the transcriptome, and how many experimental factors are of interest (i.e. the complexity of an experimental design). Because the extrinsic considerations are often (but not always) "hard and fast", we find it useful to start from those constraints and subsequently build the study design around them. If, for example, you can really only afford to spend $X on a given experiment, it will become clear how much money you have to spend on the sequencing itself (the largest material cost). Given the sequencing throughput, you will be able to estimate how many individual samples can be sequenced at a given depth, and therefore how many replicates will be available for a range of possible experimental configurations.

1.4.2 Technical vs. Biological Replication

Copy this link to clipboard

Technical replication refers to the sequencing of multiple libraries derived from the same biological sample. Non-biological variation in estimated transcript abundance across these samples can arise from unintended differences during library preparation or sequencing. This variation is distinct from biological variation among individuals as well as variation due to the sampling process itself. One way to exploit technical variation would be to sequence a number of technical replicates from one library preparation technique and compare them statistically to additional technical replicates from another library preparation method. In this case inferences about differences in estimated transcript abundance owing to library preparation method could be drawn. In many cases, however, biologists are interested in using a single technical approach to understand biological variation among different individuals or tissues, usually with respect to experimental treatments. When biological variation is large relative to technical variation (Bullard et al. 2010) the rewards in statistical power due to additional biological replicates will surpass the improved parameterization of technical variation garnered from additional technical replicates, but technical variation does become a problem for transcripts with shallow coverage (McIntyre et al. 2011).

Unless you are genuinely interested in comparing technical aspects of RNA-seq, or you expect technical variation to be especially great for a large majority of the target transcripts, we recommend greater resource allocation to biological replication. This isn't to say technical replicates should never be sequenced, but simply that limited financial resources are probably better spent on more biological, relative to more technical, replicates in many cases.

1.4.3 How many replicates should be sequenced?

Copy this link to clipboard

As with any experiment that is intended to test a null hypothesis of no difference between or among groups of individuals, differential expression studies using RNA-seq data need to be replicated in order to estimate within- and among-group variation. We understand that constraints in some study systems make replication very difficult, but it really is important.

Statistical hypothesis tests are prone to two types of error. Failure to reject the null hypothesis of no difference when there actually is a difference (a "false negative") is known as type II error, and β is used to symbolize the probability of its occurrence. The number of replicates per group in an experiment directly affects type II error, and therefore "statistical power" (which is 1-β). Power also depends on the magnitude of the effect of one condition relative to another on the variable of interest, which is in part determined by the degree of variation among individuals. Thirdly, power depends on the acceptable maximum probability of type I error (the event in which the null hypothesis is rejected in favor of the alternative when the null hypothesis is actually true). Experimenters conventionally tolerate the risk of type I error (symbolized by α) if it is less than 0.05 for a given test. When performing many hypothesis tests (as is the case for differential expression tests across thousands of transcripts), type I error must be considered in light of the many tests. For example, if one performs 20,000 tests of differential expression with an "acceptable" type I error probability of < 0.05, 1,000 rejections ofnull hypotheses are expected due to chance alone. So if you identify 2,000 differentially expressed genes under this scenario, half of these are likely false positives! Given this problem it is common practice to instead identify a group of genes for which the expected "false discovery rate" is a sufficiently low value (e.g. 0.1), given the distribution of p-values across all of the many hypothesis tests. For a more detailed description of multiple hypothesis testing for highly dimensional data, we recommend an excellent review (Noble 2009). For statistical approaches commonly used to control the false discovery rate in genomic data sets, see (Benjamini, Hochberg 1995) and (Storey 2002).

In order to estimate how many replicates to sequence for a given hypothesis test to achieve a desired level of power, you need to have at least a crude handle on treatment effect size, which depends on variance in read counts across individuals within each treatment. Note that effect sizes are easier to reliably estimate for genes with at least moderate sequencing coverage in one treatment than for genes with sparse coverage in all treatments (e.g. < 10 reads per individual), so sequencing depth for each transcript needs to be considered. Finally, because power is also a function of α, it is useful to have an estimate of the acceptable p-value threshold which has been adjusted, of course, to reflect an acceptable false discovery rate. This parameter depends on the number hypothesis tests and the distribution of original p-values across those tests.

While it is impossible for anyone to say with complete accuracy how many replicates are necessary for any given experimental objective, there are a few key guidelines relevant to the issues described above that will at least help you avoid conducting a completely ineffective study. The major considerations that dictate these guidelines are listed below. Additional relevant information may be found in the Analysis section in this website.

1.4.4 Depth of Sequencing

Copy this link to clipboard

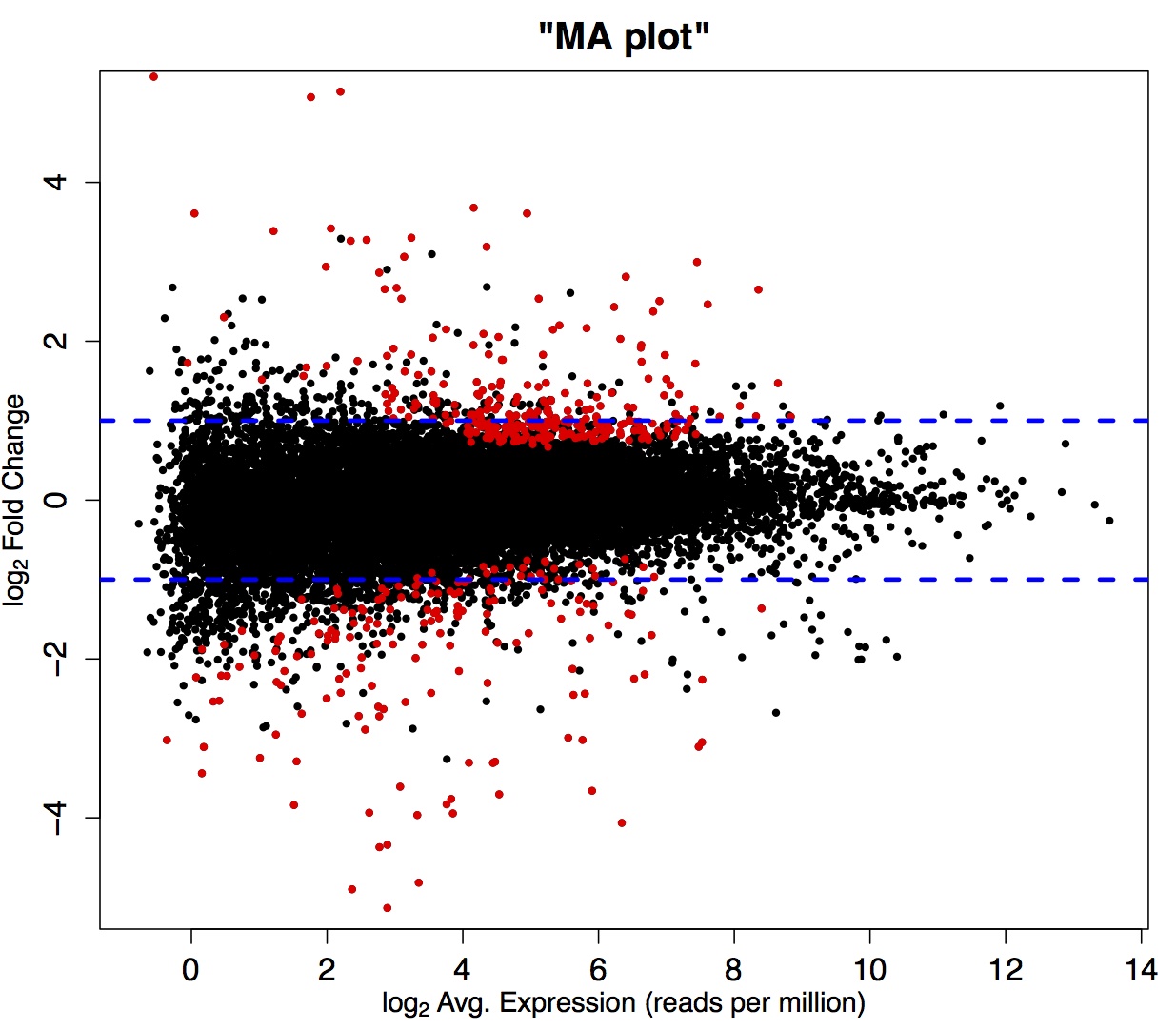

As mentioned previously, variation due to the sampling process makes an especially large contribution to the total variance among individuals for transcripts represented by few reads. This means that identifying a treatment effect on genes with shallow coverage is not likely amidst the high sampling noise (see Figure 1.1).

A study of chicken RNA-seq data revealed that expression estimate correspondence among technical replicates (no biological variation) for genes with above-median coverage stabilized at about 10 million reads per sample (Wang et al. 2011). It therefore stands to reason that 10 million reads per sample is a benchmark from which to start, although this is by no means a hard and fast rule for all data set types.

Figure 1.1

1.4.5 Experimental Complexity

Copy this link to clipboard

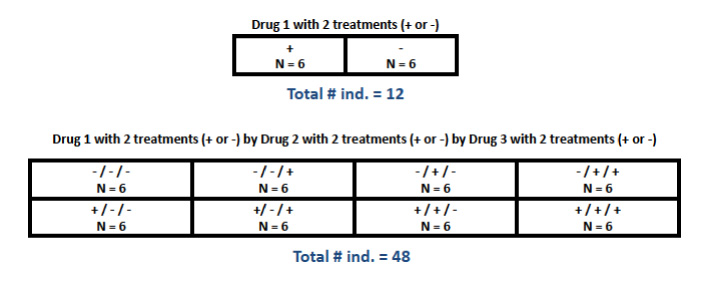

A comparison between two groups (e.g. one "experimental" and one "control") is the simplest type of differential expression study design, and probably a fairly common one. For more complex designs, however, it is important to remember that sufficient replication has to occur at every level of comparison. In a fully "factorial" design, for example, more than one experimental "factor" is of interest, each with two or more treatments, such that any individual receives one of multiple possible treatments at each factor. In this case, every possible combination of treatments across factors needs to be replicated sufficiently, which results in a much larger total sample size (see Figure 1.2). Many more complicated design classes are possible. A formal, comprehensive treatment of experimental design is beyond the scope of this website, so please consult an appropriate biometry text, Sokal and Rohlf's Biometry is one very suitable reference.

Figure 1.2

1.4.6 Target Transcript Properties

Copy this link to clipboard

The nature of the individual transcripts in which one is most interested will undoubtedly dictate the success of an RNA-seq study. For instance, if you have no expectations for differential expression and simply want a preliminary list of candidates that are grossly differentially expressed, you are likely to reach this goal. If, on the other hand, you are interested in testing whether very specific sets of transcripts are different in abundance among treatments, and you have reason to believe they might be expressed at low levels, you may not reach sufficient coverage (even with tens of millions of reads per sample) to detect a real difference. In general, detecting all real differences in expression across the entire transcriptome is not a realistic expectation given the statistical issues addressed above.

1.4.7 Treatment Effect Size

Copy this link to clipboard

As mentioned above, the effect size will to some extent be affected by sampling variance, and therefore by depth of coverage. There are also clear biological reasons to expect differences in effect size across transcripts or specific types of samples. Some treatments will simply affect transcriptional targets more directly than others, so large differences between treatments for these targets are expected. Some transcriptional responses to a treatment may be more variable among individuals, owing to greater genetic variation at the regions of the genome responsible for mediating the response, which will ultimately manifest as a smaller effect size. In some cases very large, extensive transcriptional differences are expected across the board. If, for example, one is comparing transcript abundance between two different tissue types, many large differences at the level of read counts are expected.

1.5 No Substitute for "Pilot" Data

Copy this link to clipboard

You have probably noticed that these key considerations (especially target transcript attributes and effect sizes) seem likely to vary widely on a case-by-case basis. Factors like the nature of the treatment(s), the type of sample, or the ability to control the environment of subjects, for example, really differ from experiment to experiment. This means that published data from some other experimental system, or even naïvely simulated data, may not be informative enough for critical decisions up front. In light of this conundrum, our single best recommendation is to first sequence a subset of replicates from a larger experiment, get a handle on coverage levels across genes and effect sizes of treatments, and then use this information to estimate the level of replication necessary to reach a given level of statistical power for a given fraction of the transcriptome. One strategy, provided the experimental setup is not too costly, might be to run the desired experiment with a substantial level of replication (e.g. 10-20 individuals per treatment or combination of treatments), but only actually sequence 3 or 4 samples at moderate to high coverage (e.g. 10 million reads per individual) for each treatment level. This way you would have enough preliminary information to conduct a reliable power analysis, but still have plenty of remaining samples "in the bank" for additional sequencing.

Fortunately, the Marth Lab at Boston College has produced a handy, flexible tool for estimating power of RNA-seq study designs, given a suite of user-supplied parameters. This tool, called "Scotty" (Busby et al. 2013), is especially effective when fed user-supplied pilot data, and will output a range of sample size/coverage configurations acceptable for specified power and cost constraints. The tool may be found here. Although this tool is currently designed to accommodate a simple two-treatment experimental design, the framework is in principle extendable to more complicated designs.

2. RNA Preparation

Copy this link to clipboard

-

Design ExperimentSet up the experiment to address your questions.

-

RNA PreparationIsolate and purify input RNA.

-

Prepare LibrariesConvert the RNA to cDNA; add sequencing adapters.

-

SequenceSequence cDNAs using a sequencing platform.

-

AnalysisAnalyze the resulting short-read sequences.

2.1 Overview

Copy this link to clipboard

Since the goal of RNA-seq is to characterize the transcriptome the first step naturally involves isolating and purifying cellular RNAs. Isolation and purification of RNA typically involves disrupting cells in the presence of detergents and chaotropic agents. Depending upon the starting material mechanical disruption is also recommended. After homogenization, RNA can be recovered and purified from the total cell lysate using either liquid-liquid partitioning or solid-phase extraction. Typically the total RNA is then enriched for messenger RNA (mRNA). This can be done by either directly selecting mRNA or by selectively removing ribosomal RNA (rRNA). To make the RNA suitable for RNA-seq it is typically fragmented and then the quality and fragmentation are assessed.

2.2 Working with RNA

Copy this link to clipboard

The success of RNA-seq experiments is highly dependent upon recovering pure and intact RNA. Because RNA is more labile than DNA and RNases are very stable enzymes extra care should be taken when purifying and working with RNA. Some general considerations are:

Precautions when working with RNA

- Maintain separate reagents and consumables for RNA extraction. Avoid co-storage with reagent kits that include RNases.

- Process the samples quickly and keep the RNA on ice when possible.

- Wear gloves and work in a clean workspace.

- Use RNase free water. Water from commercial purifiers is generally RNase-free (as long as the purifier and dispensers are kept thoroughly clean). To ensure that water is RNase-free it can be treated with diethyl pyrocarbonate (DEPC) as follows. Add DEPC to 0.05% and incubate overnight at room temperature. The DEPC is then destroyed by autoclaving for 30 minutes.

- Glassware can be heated at 250 °C for several hours to remove RNase contamination.

- Plastic-ware can be soaked in 0.1 N NaOH/1mM EDTA then rinsed thoroughly with RNase free water.

Other information about working with RNA can be found at: Working with RNA (Ambion).

2.3 Stabilize RNA

Copy this link to clipboard

The overall goal of RNA-seq is to characterize the transcriptome at the moment the cells were harvested; thus care should be taken to limit degradation of RNAs.

More on RNA stabilization

RNA is much more labile than DNA, and the moment a tissue sample is dissected, for example, the cells begin to die and the RNA begins to be degraded. Since there is often a time delay between harvesting and isolation of the RNA samples should be frozen in liquid nitrogen immediately upon harvest. In addition several reagents have been developed to preserve the RNA in fresh tissue. Two of the more commonly used reagents are RNAstable® (from Biomatrica) and RNAlater® (from Qiagen). Carefully follow instructions in the manuals for stabilization reagents. Qiagen also sells a reagent (RNAprotect Bacteria Reagent) that is specifically formulated for bacteria. Even if the samples can be frozen immediately it is recommended that an RNA stabilizer is added before freezing since the moment the tissues begin to thaw in the subsequent extraction the RNAs are subject to degradation.

2.4 Isolate and purify RNA

Copy this link to clipboard

Two of the primary challenges of the isolation procedure are (1) to separate the RNA from other cellular materials and (2) to preserve the integrity of the RNA that is extracted. This is complicated by the fact that DNA and RNA are chemically very similar to each other and that RNases are notoriously stable proteins that can still be active under conditions that denature other proteins.

2.4.1 Solubilization

Copy this link to clipboard

Virtually all RNA extraction procedures rely upon disrupting tissues in an aqueous solution containing organic buffers, guanidinium salts, and ionic detergents.

More about RNA solubilization

The buffers that are most commonly used include TRIS and sodium-acetate, which are added to adjust and maintain the pH. Chaotropic agents (compounds that disrupt both hydrophobic and hydrogen-bond interactions) are added to denature proteins. The most commonly used chaotropic agents are guanidinium salts. Guanidinium is a strong protein denaturant capable of denaturing recalcitrant proteins such as RNases. The benefits of using guanidinium for purification of RNA were first reported in 1951 (Volkin and Carter 1951) and since that time virtually all RNA extraction procedures have incorporated the use of high concentrations (4-6M) of guanidinium thiocyanate or guanidinium hydrochloride. Ionic detergents are added to help solubilize cell membranes and lipids. The most commonly used detergents include sodium dodecyl sulfate (SDS), sodium lauroyl sarcosinate (sarkosyl), sodium deoxycholate, and cetyltrimethylammonium bromide (CTAB).

2.4.2 Mechanical Homogenization

Copy this link to clipboard

Incomplete homogenization can significantly reduce RNA yields.

Different types of samples require different methods to completely disrupt cells.

More about tissue homogenization

Despite the presence of detergent and chaotropic agents it is necessary to also incorporate some form of mechanical homogenization to completely disrupt cell walls, plasma membranes, and organelles. For some samples, such as mammalian cell cultures, addition of chaotropic agents is enough to lyse the cells. However, mechanical homogenization is still recommended to shear the DNA and assist in the solubilization of organelles. For cell cultures the homogenization can be as simple as rapidly pipetting the sample or passing it through a syringe needle several times. Other tissues require more extreme mechanical processing.

Mechanical methods for homogenizing tissues include using cryo-grinding with a mortar/pestle, shearing using a rotor-stator homogenizer or a Dounce homogenizer, sonication, or bead-beating. The method that one should choose is highly dependent upon the starting material. The following links provide useful information for choosing appropriate homogenizers.

Tissue specific considerations

Isolation of RNA from muscle and skin tissues can be difficult due to the presence of connective tissue, collagen, and contractile proteins. For these tissues proteinase K can be added to degrade the problematic proteins. Proteinase K is an especially robust enzyme that retains activity under conditions that denature most other proteins. For these samples it is generally advised to first use mechanical homogenization in high concentrations of guanidinium. Then the homogenate should be diluted to decrease the guanidinium concentrations to ~2M before adding the proteinase K. However, researchers should be aware this dilution step can affect the downstream purification and in all cases they should follow protocols that have been developed specifically for these tissues.

Tissues high in fat:Homogenates from tissues that are high in fat (e.g., brain, adipose tissue, and plant seeds) should be extracted with chloroform to remove lipids.

2.4.3 Recovery of RNA from lysate

Copy this link to clipboard

After homogenization two methods are commonly used to recover RNA from the cell lysate. (1) by extraction with organic solvents or (2) by solid-phase extraction on silica.

2.4.3.1 Organic extraction

Copy this link to clipboard

One of the oldest methods for recovering RNA from the lysate involves using a mixture of acidified phenol/chloroform/isoamyl alcohol.

More on organic extraction

Use of phenol for recovering RNA was first reported in 1956 (Kirby 1956), and in 1987 Chomczynski and Sacchi presented the method that is still used today (Chomczynski and Sacchi 1987; Chomczynski and Sacchi 2006). Acidified phenol/chloroform/isoamyl alcohol is added to the cellular homogenate, mixed to make an emulsion and then the organic and aqueous phases are separated by centrifugation. Generally a third phase (the interphase) also forms between the lower organic and upper aqueous phases. The aqueous phase contains the RNA while denatured proteins and lipids preferentially partition into the interphase and organic phase. The aqueous phase can be recovered by pipet. Under acidic conditions (pH ~4.8) DNA preferentially partitions into the organic phase. It is important to realize that the pH is a critical factor in this technique since at higher pH DNA will end up in the aqueous layer and contaminate the RNA.

Many researchers choose to use one of several premixed solvents that are based on the Chomczynski protocol. Some of the commonly used products include: TRIzol (Life Technologies), TRIsure (Bioline), and PureZOL (BIO-RAD).

After pipetting off the aqueous layer the RNA is typically concentrated and desalted using ethanol or isopropanol precipitation.

Considerations when using phenol/chloroform partitioning

- One of the most common mistakes is to start with too much tissue, since the pH, and the concentrations of guanidinium, phenol, and chloroform are all critical factors.

- Care must be taken to ensure that none of the interphase or organic phase is withdrawn during removal of the aqueous layer. It is better to sacrifice some of the aqueous layer than to try to recover all of it. If it is very important to recover all of the RNA then it is better to recover a safe amount of the initial aqueous layer then add more extraction buffer and perform a second extraction. The two aqueous layers can then be combined and ethanol precipitated.

- After the phenol/chloroform extraction it is often beneficial to perform a final extraction using just chloroform to remove traces of phenol, which can inhibit downstream reactions.

2.4.3.2 Solid-phase extraction

Copy this link to clipboard

An alternate procedure for separating RNA from other cellular macromolecules involves using solid-phase extraction onto silica. The RNA is bound onto the surface of silica fibers directly out of cellular lysate, washed of contaminants while bound, and then eluted back into solution.

More on solid-phase extraction

In the 1970's it was demonstrated that nucleic acids in the presence of high concentrations of chaotropic agents would bind to silica. Since nucleic acids and silica are both negatively charged the interaction is not simply due to charge-charge interactions. Instead, binding is thought to be due to the formation of a cationic salt bridge, which is promoted by chaotropic compounds. This phenomenon formed the basis of procedures designed to recover and purify nucleic acids from cell lysates.

When using this technique to purify RNA the guanidinium serves to both denature proteins and promote binding to the silica. Tissues are homogenized in the presence of guanidinium first then alcohol (typically ethanol or isopropanol) is added before the lysate is applied to a small column containing silica. It is important to realize that the final concentrations of guanidinium and alcohol are crucial for success and in all cases researchers should carefully follow established protocols. Centrifugation or a vacuum is then used to force the lysate through the column after which it is washed using salt solutions that do not disrupt the nucleic acid: silica interaction but remove proteins and other cellular debris. After washing, the nucleic acid is eluted from the column using a low ionic strength buffer. Since the bound RNA can be eluted using very small amounts of elution buffer, concentration by ethanol precipitation is not generally needed before proceeding to downstream procedures. Before the advent of commercially available kits researchers built their own columns using diatoms or crushed glass. However, numerous kits are now commercially available and these are now the method of choice. Several of the most commonly used kits are available from Qiagen, Promega, Agilent Technologies, and Ambion|Life Technologies.

Solid-phase extraction vs. organic extraction

Both techniques are commonly used. However each has certain advantages and disadvantages. If appropriate care is taken both techniques are capable of producing highly purified RNA.

- Organic extraction is cheaper and is easier to scale up for larger amounts of starting material.

- Silica columns are more expensive but are easier to use and are more amenable for processing multiple samples in parallel.

- Solid-phase extraction has the advantage that the RNA can be eluted using very small amounts of elution buffer, which obviates the need to perform an ethanol precipitation step.

In practice many protocols actually combine both procedures. In this case, after adding appropriate amounts of alcohol to the aqueous phase from the phenol/chloroform extraction, it is further purified using a silica column.

Small RNAs

It should be noted that protocols for isolating mRNA are not generally optimized for isolating small RNAs such as siRNA, piwiRNA, and miRNAs. If the researcher is interested in purifying these RNAs then they must be sure to use methods that have been specifically designed for small RNAs.

Removing genomic DNA contaminants

Generally, if one carefully follows established protocols very little genomic DNA is carried over into the final total RNA sample. However, it is sometimes necessary to digest DNA using DNase I.

Another useful online guideline for RNA preparation from Ambion can be found here.

2.4.4 Quantitation and Quality Assessment

Copy this link to clipboard

The purity and/or yield of the RNA can be measured using a spectrophotometer, fluorometer or Bioanalyzer/Fragment Analyzer. And the quality of a total RNA prep can be assessed for signs of degradation by running a portion on an agarose or acrylamide gel or by using an instrument such as the Agilent Bioanalyzer.

2.4.4.1 Assessing RNA quality

Copy this link to clipboard

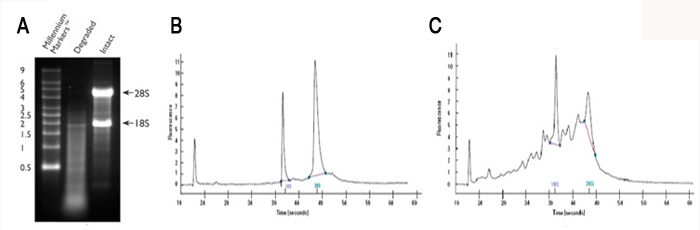

Agarose and acrylamide gels are commonly used, since most labs have the necessary equipment; however, compared to the Bioanalyzer relatively large amounts of RNA are required. Examples of good and poor quality RNA preps are shown in Figure 2.1A (agarose gel) and Figure 2.1B, 2.1C (Bioanalyzer trace).

Figure 2.1 Intact vs. degraded RNA.

2.4.4.2 Assessing RNA quantity

Copy this link to clipboard

The most commonly used methods for assessing the quantity of RNA include using: UV absorbance, fluorescence, and an Agilent Bioanalyzer. Each of these methods has strengths and weaknesses. We recommend fluorometry for quantification and the Bioanalyzer (or equivalent device) for quantification and RNA integrity evaluation. For a useful (albeit slightly dated) comparison of methods see Aranda et al. 2009.

A fluorometer (such as Life Technologies' Qubit) measures the concentration of RNA bound to a fluorescent dye. The method is not as subject to skewing by contaminants such salts, detergents, etc, as UV absorbance.

The concentration of RNA can also be estimated from a Bioanalyzer or Fragment Analyzer trace using the proprietary software. The accuracy of this method may be the least sensitive to contaminants such as DNA and phenol.

The oldest and perhaps most common way to quantitate RNA is by measuring the absorbance at 260 nm.

More on quantitation by UV absorbance

A 40 µg/ml solution of RNA in a cuvette with a 1 cm path length has an A260 = 1.0. To ensure an accurate reading the absorbance should be between 0.1 and 1.0.

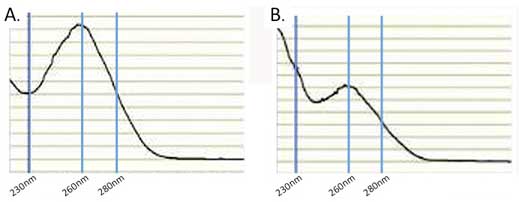

Large errors in the quantitation can be introduced by the presence of interfering compounds that also absorb at 260 nm. To help guard against this problem it is important to examine the absorbance profile from 220-300 nm. The profile should look similar to that in Figure 2.1A which is typical for clean nucleic acid.

If the profile looks nothing like this then there is a problem. Either there is no RNA or the RNA is contaminated with other UV absorbing compounds. Some of the most common contaminants that can interfere include DNA, phenol, guanidinium, and protein all of which also absorb at 260 nm. In addition RNA isolated from plants is often contaminated with compounds such as polysaccharides, flavones, and polyphenols, all of which can lead to erroneous absorbance measurements. A common recommendation is to evaluate the A260/A280 ratio. Pure RNA has an A260/A280 ratio of 2. If there is significant protein contamination this ratio will be less than 2. However, this method alone will not accurately catch guanidinium and phenol contamination. Both of these compounds strongly absorb at 230 nm so the A260/A230 ratio can be used to determine if they are present (see Figure 2.1B). Pure RNA as an A260/A230 ratio of 2 and a value less than this is a good indication that there are contaminants. Typically, UV absorbing contaminants can be removed by either cleaning the RNA on a silica spin-column or by using ethanol precipitation.

Absorbance measurements should always be made on RNA that is suspended in a buffered aqueous solution like TE buffer (10 mM TRIS, pH 8, 1 mM EDTA). The UV absorbance of RNA is not pH dependent but that of many common contaminants is. TE has little absorbance from 230-280 nm so it will not interfere with the assay; however it is always a good practice to blank the spectrophotometer with the RNA resuspension buffer.

Figure 2.2

2.5 Target enrichment

Copy this link to clipboard

It is often necessary to enrich specific classes of RNA in the sample to be sequenced. Total RNA recovered using the procedures described above consists of >80% ribosomal RNA (rRNA) (Raz et al. 2011), so if rRNA were not removed, the majority of the final sequence reads would be from rRNA.

The four methods that are commonly used to enrich specific classes of RNAs are:

- Selection of target RNAs via hybridization.

- Removal of non-target RNAs via hybridization.

- Copy-number normalization via duplex-specific nuclease digestion.

- Target enrichment via size-selection

2.5.1 Selection of mRNAs via hybridization to oligo-dT

Copy this link to clipboard

2.5.1.1 Fishing out mature mRNA by the tail

Copy this link to clipboard

This method uses oligo-dT to selectively recover mature mRNAs by duplexing with their poly-A tails. At the same time, the proportions of RNA classes that don't have long poly-A stretches will be reduced.

More about selection of mRNAs via oligo-dT hybridization

Several variants of this method have been developed; these include using columns containing oligo-dT bound to cellulose, using oligo-dT bound to plastic plates via a biotin linkage, and using magnetic beads to which oligo-dT has been attached via a biotin linkage. All of these approaches work well and numerous commercial kits are available.

Since this method only recovers poly-adenylated RNAs it is useful for characterizing the levels of mature mRNAs, but other RNAs such as immature mRNAs and poly-A- non-coding RNAs (ncRNAs) will be lost.

Some mitochondrial mRNAs are also poly-adenylated and will be enriched by oligo-dT.

Bacterial mRNAs are not poly-adenylated, and therefore cannot be enriched using oligo-dT based hybridization methods.

2.5.1.2 SuperSAGE enrichment for 3′ mRNA tags

Copy this link to clipboard

SuperSAGE, a high-throughput version of SAGE (serial analysis of gene expression), is an approach that targets for sequencing just the 3′-end of transcripts with a polyA tail. SuperSAGE is especially powerful for differential gene expression because sequencing coverage is focused on a short, 3′ region of each transcript and not lost on other regions. The limitation is that one needs a well-annotated genome or transcriptome against which to align the tags, which predominantly fall in 3′ UTRs. Furthermore, this approach does not capture alternative splicing effectively.

More on SuperSAGE

Biotinylated oligo-dTs that include an EcoP15I recognition site are hybridized to polyadenylated mRNAs in the sample, followed by first- and second-strand cDNA synthesis. The cDNA is then cut upstream of the polyA tail with a frequent-cutting restriction enzyme such as NlaIII, and pulled down with streptavidin-coated beads. At this point an adapter, which includes the platform-specific nucleotides necessary for high-throughput sequencing, another EcoP15I recognition site, and a NlaIII overhang is ligated to the bead-bound tags. EcoP15I digestion will then cut the cDNA at a distance 25-27 nt from the recognition sequence, and this portion of the tag is sequenced after ligation of another adapter with platform-specific nucleotides, and PCR amplification.

A recent review of the technique and platform-specific protocols are described in Matsumura et al. 2012.

2.5.2 Removal of ribosomal sequences via hybridization

Copy this link to clipboard

Several companies provide kits that can be used to selectively remove ribosomal RNA from total RNA samples. In contrast to polyA+ enrichment, this approach preserves non-polyadenylated RNAs allowing one to investigate broader classes of RNAs including immature mRNAs and non-polyadenylated ncRNAs.

This technique uses oligos that are complementary to highly conserved ribosomal RNA sequences to bind and remove the rRNA.

More on rRNA depletion by hybridization

The oligo/rRNA complex is removed from the solution via binding to beads. Different kits use different technologies to capture the bound complex. The capture oligos in the Ribominus (Invitrogen/Life Technologies) and Ribo-Zero (Epicentre/Illumina) kits have a biotin tag, that can be captured using streptavidin coated magnetic beads. The GeneRead kit (Qiagen) uses antibodies that specifically recognize the rRNA/oligo complex.

All of these kits are capable of removing the majority of rRNA from a total RNA sample. However, users that are working with non-model organisms should consult the manufacturer to verify that the capture oligos are compatible with the rRNA in their sample. In addition, since these kits rely upon a limited number of oligos they only work well if the input RNA is not degraded. It is therefore important that users verify the quality of their RNA before proceeding.

The mRNA-ONLY kit (Epicentre/Illumina) uses a 5′-phosphate dependent exonuclease to degrade RNAs (such as rRNA) that have a 5′-monophosphate. This exonuclease will not degrade intact and mature mRNAs that have a 5′-cap. However, the manufacturer does not recommend this kit for RNA-seq.

2.5.3 Copy-number normalization via duplex-specific nuclease digestion (DSN)

Copy this link to clipboard

The concentration of specific mRNAs varies dramatically within a cell. Some transcripts may be present at relatively high concentrations (>10,000 copies per cell) while for others there may be only a few copies. Therefore much deeper sequencing is required to interrogate the low abundance transcripts. DSN-normalization is a technique that partially normalizes the concentrations of each mRNA by selectively removing many of the most abundant transcripts, which effectively increases the relative concentration of the low abundance transcripts (Zhulidov 2004 and Zhulidov 2005). DSN can therefore be very useful for RNA-seq experiments that have annotative goals such as gene-discovery and characterization of transcript architectures (see for instance Ekblom et al. 2012).

More on DSN normalization

DSN-normalization is performed after preparation of cDNAs and prior to library cloning. After denaturation of ds cDNA flanked with known adapters, it is subjected to renaturation. During renaturation, abundant transcripts re-anneal more quickly than those that are less abundant. Two fractions are formed: a double-strand fraction of abundant cDNA and a normalized fraction composed of single-stranded cDNA. The double-stranded cDNA fraction is then degraded by a duplex-specific nuclease (DSN).

For additional information regarding DSN see also: evrogen and sequensys.

2.5.4 Target enrichment via size-selection

Copy this link to clipboard

This method is generally reserved for enrichment of small ncRNAs such as miRNA, siRNA, and piRNAs. Since these RNAs are much smaller than mRNA and rRNA they can be separated by electrophoresis of the total RNA through an agarose or acrylamide gel and then by cutting out the region that corresponds to the size of interest. Although effective, this method is laborious and recoveries can be low. Several companies now offer small RNA purification kits that are based on solid phase extraction.

2.6 RNA fragmentation

Copy this link to clipboard

Most current sequencing platforms are capable of providing only relatively short sequence reads (~40-400bp depending upon the platform). Therefore, most protocols incorporate a fragmentation step to improve sequence coverage over the transcriptome. However, protocols differ as to when the fragmentation is performed. Most of the original protocols fragmented cDNA; however, fragmentation of the RNA (before converting it to cDNA) is becoming more popular. Four different methods are commonly used to fragment RNA: enzymatic, metal ion, heat, and sonication.

Although all of these methods are commonly used to fragment RNA, little information has been published comparing their relative performance. The goal is to produce a population of RNA fragments that are on average about 200 bp. The effectiveness of the fragmentation reaction should be assessed by evaluating the RNA on a gel or by using an Agilent Bioanalyzer. An example of fragmented RNA can be seen in Figure 2.3. This example and guidelines for fragmenting RNA can be found at the U. of Texas at Austin FGRS site.

More on mechanisms of fragmentation

Enzymatic

Enzymatic fragmentation is generally performed using E. coli RNase III. RNase III randomly cleaves double-stranded portions of RNA and leaves a 5′-phosphate and a 3′-hydroxyl.

Metal ionChemically induced fragmentation uses metal ions that act as Brönsted bases to abstract a proton from the 2′-OH groups of the riboses (Forconi and Herschlag 2009). This generates a 2′-O- group that attacks the phosphorous atom, resulting in departure of the 5′-OH group. This reaction results in 5′-OH and 3′-phosphates. The kinetics for this reaction are much faster for single-stranded regions of RNA. Most metal ions are capable of fragmenting RNA with differing levels of efficiency. Lanthanide ions (e.g. Eu3+, Tb3+, and Yb3+) are particularly efficient. However, the ions that are most commonly used are Zn2+ and Mg2+. Fragmentation is performed at high temperatures (70-90 °C) and slightly alkaline pH (pH 8-9). The reaction can be easily stopped by the addition of a metal chelator such as EDTA.

HeatRNA can also be fragmented by heating at 95 °C in water for 30 minutes.

SonicationRNA can be fragmented using sonication. Sonication uses high intensity sound waves to produce micro-bubbles that upon collapse release large amounts of energy capable of breaking ribonucleic-acid bonds.

Figure 2.3

3. Library Preparation

Copy this link to clipboard

-

Design ExperimentSet up the experiment to address your questions.

-

RNA PreparationIsolate and purify input RNA.

-

Prepare LibrariesConvert the RNA to cDNA; add sequencing adapters.

-

SequenceSequence cDNAs using a sequencing platform.

-

AnalysisAnalyze the resulting short-read sequences.

3.1 Overview

Copy this link to clipboard

After obtaining an RNA preparation that is suitable for RNA-seq the RNA must be converted to double-stranded complementary DNA (cDNA). Currently available sequencing technologies require a DNA template with platform-specific "adaptor" sequences at either end of each molecule. Generating the cDNA, adding the adaptors, and amplifying the DNA for sequencing round out the process of "library preparation". Competing RNA-seq procedures diverge during library preparation, and the protocol details are highly dependent upon which strategy and sequencing platform is used.

3.2 First-strand synthesis

Copy this link to clipboard

In order to convert RNA to DNA the RNA must be used as a template for DNA polymerase. Most DNA polymerases cannot use RNA as a template. However, retroviruses encode a unique type of polymerase known as reverse transcriptases, which are able to synthesize DNA using an RNA template.

All of the current protocols utilize the ability of reverse transcriptase (RT) to synthesize a DNA strand using RNA as a template. RT, like other polymerases, requires a primer annealed to either DNA or RNA to initiate polymerization. Several first-strand priming options are used. These include the following:

- Using oligo-dT to prime off of the poly-A tail of mature mRNA.

- Using random primers to prime at 'random' positions along the RNA molecule.

- Priming off of oligos that are ligated onto the ends of the RNA.

Each of these priming options has strengths and weaknesses which are discussed more fully below.

More on first-strand synthesis

RTs, like other polymerases, can only initiate synthesis from a primer with a 3′-OH that is annealed to the template strand. Several first-strand priming options are used for RNA-seq. Priming options can be divided into two major categories (1) priming off of an oligo ligated to the 3′-end of the RNA template, or (2) using primers with random sequences that can direct synthesis at random locations throughout the RNA template. The most commonly used priming methods are discussed more fully below.

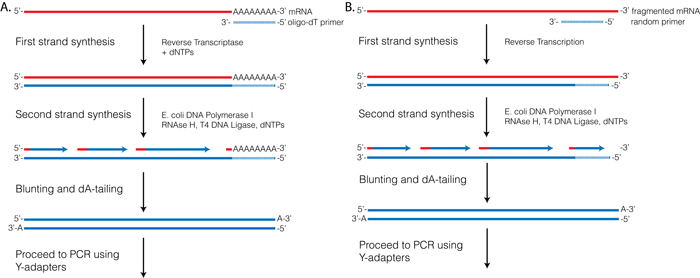

Figure 3.1

3.2.1 First-strand priming using oligo-dT

Copy this link to clipboard

One of the oldest methods for first-strand priming involves using oligo-dT to prime synthesis off of the poly-A tail that is found on most mature eukaryotic mRNAs. This method has the advantage that since the priming sequence is the same for all mRNAs then they should all be equally primed regardless of their coding sequence. However, this approach has several distinct disadvantages. The first is that non-polyadenylated RNAs are lost. If one is only interested in characterizing the expression of coding RNAs then this isn't really an issue. The other problem with this approach is related to the fact that RTs are not highly processive polymerases and can prematurely terminate. This phenomenon can lead to biased enrichment of 3′-ends relative to 5′-ends, which can interfere with downstream quantification and analysis.

Considerations before using oligo-dT for 1st strand priming

- Oligo-dT priming is not compatible with RNA that has been fragmented since only the 3′-terminal fragments would be recovered. If using oligo-dT for 1st strand priming then fragmentation should be performed on the double stranded cDNA.

- Oligo-dT priming cannot be used with bacterial mRNAs since they do not possess a poly-A tail.

3.2.2 First-strand priming using random oligos

Copy this link to clipboard

First-strand synthesis can also be primed using primers with random sequences. This is probably the most commonly used technique for first-strand synthesis for RNA-seq. This approach has several advantages. The first is that non-polyadenylated RNAs will be recovered, making it possible to recover ncRNAs and use RNA fragmentation. Secondly, since random primers can anneal throughout the length of the RNA the 3′-bias that is observed with oligo-dT priming is eliminated resulting in a more uniform transcript coverage. Several studies have demonstrated, however, that random priming is not random, and instead priming hot-spots are commonly observed (see for instance: Roberts et al. 2011 and Hansen 2010).

3.2.3 First-strand priming using a pre-ligated oligo

Copy this link to clipboard

Another option for first-strand synthesis is to first ligate an adapter with a known sequence to the 3′-end of the RNA using T4-RNA ligase. This sequence can subsequently be used to prime synthesis of the first strand. This has the advantage of reducing priming bias since all RNAs are primed using the same sequence, and, as discussed below, can be used to retain strand-specific information. This approach is used in both the Small RNA kit from Illumina and in the SOLiD RNA kits from Life Technologies.

3.3 Second-strand synthesis

Copy this link to clipboard

The second cDNA strand is synthesized by a DNA polymerase using the RT-synthesized DNA-strand as a template.

Second-strand synthesis also requires a primer that is annealed to the first strand, and again several options exist. These include:

- Synthesis by RNA nicking and displacement.

- Using an oligo that is complementary to an adapter pre-ligated to the 5′-end of the RNA template.

- Using a primer containing a 3′-oligo-dG (this method, referred to as SMART (Zhu et al. 2001) takes advantage of the phenomenon that the MMLV reverse-transcriptase leaves a terminal non-template poly-dC 3′-overhang).

More on second-strand synthesis

3.3.1 Second-strand synthesis by RNA displacement

Copy this link to clipboard

This is the oldest and one of the most commonly used procedures. It relies upon using a mix of E. coli DNA polymerase I, E. coli RNase H, and T4 DNA ligase. Like other polymerases, E. coli DNA polymerase I requires a double-stranded primer with a 3′-OH to initiate synthesis. In this reaction RNase H is used to nick the original RNA template. The resulting RNA fragments can then function as primers to initiate synthesis off of the first-strand cDNA. During synthesis E. coli DNA polymerase I uses its 5′→3′ exonuclease activity to degrade on-coming RNA. Finally T4 DNA ligase repairs nicks that are left from the initial priming.

This reaction has been well characterized and optimized, and is highly efficient. The primary drawback to this method is that the region corresponding to the 5′-terminal RNA is lost. This has little effect on gene expression studies but can be a problem for using RNA-seq to identify transcription start sites.

3.3.2 Second-strand synthesis using an oligo ligated to the 5′-end of the RNA template

Copy this link to clipboard

Several methods take advantage of pre-ligating an adapter to the 5′-end of the RNA template before the first-strand synthesis reaction, resulting in synthesis of a first-strand cDNA with a known sequence at the 3′-end. Oligos that are complementary to this adapter can then be used to prime second-strand synthesis. This technique allows one to recover intact 5′-ends of the template RNA, and is used in both the Small RNA kit from Illumina and in the SOLiD RNA kits from Life Technologies.

3.3.3 Second-strand priming using oligo-dG and template switching (SMART)

Copy this link to clipboard

When MMLV RT reaches the end of the RNA template it tends to add several non-template dC nucleotides. This phenomenon has been exploited for the creation of cDNA libraries and it is the basis of Clontech's SMART RNA-seq system (Zhu et al. 2001). In this approach an oligo containing 3′-oligo-dG (referred to as the SMART oligo) is added to the first-strand synthesis reaction. The SMART oligo can hybridize to the terminal oligo-dC created by MMLV. This creates a new initiation site for another MMLV, which then uses the SMART oligo to extend synthesis of the first strand across the SMART oligo, which effectively appends the complementary sequence to the 3′-end of the first strand. The RNA template is then degraded using RNase H leaving the SMART oligo to prime synthesis of the second strand.

This method has the advantage that it successfully captures the terminal 5′-end of the mRNA, and, if used with oligo-dT for first-strand priming off of the poly-A tail, can theoretically capture entire transcripts.

3.4 Optional: Fragmentation of cDNA

Copy this link to clipboard

Currently most RNA-seq cDNA libraries are constructed using RNA that has been fragmented as the initial template. However, there are situations where it is preferable to construct cDNA libraries using intact (i.e. unfragmented) RNA.

More on fragmentation of cDNA

Examples where this would be the case include using oligo-dT to prime 1st strand synthesis, or where the goal is to sequence full-length RNA transcripts. In these situations it is necessary to fragment the double stranded cDNA before proceeding to the next step in the preparation of sequencing libraries. cDNA can be sheared using either mechanical (e.g. nebulization or sonication) or enzymatic methods. We cannot make recommendations regarding which method is preferable since we are not currently aware of a systematic comparison. Regardless of which method is used it is important that the shearing is random and produces a fairly tight, symmetrical size distribution (typically 200-300bp) depending on the experimental goals.

If you are starting with fragmented RNA it is not necessary to fragment your cDNA.

3.5 Sequencing adapters

Copy this link to clipboard

To sequence the cDNAs, specific adapter sequences must be present at the ends of the fragments. The roles and composition of the adapter sequences vary depending upon the sequencing platform. Adapters contain several different functional elements that are needed for sequencing and may contain one or more optional elements.

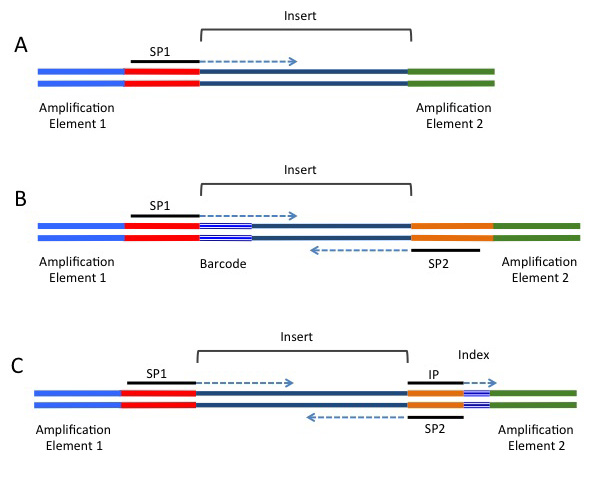

Most of the current sequencing platforms use clonal amplification to create clusters of identical molecules that are tethered next to each other on a solid support. For the Illumina platform the clusters are attached to the surface of a flow-cell, while for the 454, IonTorrent, and SOLiD platforms the clusters are generated on beads using emulsion PCR. Regardless of the platform, two types of sequence elements are required: (1) Terminal platform-dependent sequences that are required for clonal amplification and attachment to the sequencing support. (2) Sequences for priming the sequencing reaction. In addition several optional elements may be present. These include sequence tags to allow for multiplexing (known as barcodes or indices), and a second sequence-priming site to allow for sequencing of the insert from the other side (known as paired-end sequencing). Figure 3.2 and Table 3.1 provide additional information regarding these adapter elements.

Figure 3.2

Table 3.1 List of functional elements contained in sequencing adapters.

| Adapter element | Requirement | Location | Function |

| Amplification element | Required | 5′ and 3′ terminus | Clonal amplification of the construct |

| Primary sequencing priming site | Required | Adjacent to the insert | Initiating the primary sequencing reaction |

| Barcode/Index | Optional | 5′-end of the insert/Between the sequencing priming site and its respective amplification element | Provides a unique label to sequences from different samples. Allows pooling of multiple experiments in a single sequencing reaction. |

| Paired-end sequencing priming site | Optional | Adjacent to the insert on the side opposite of the primary sequencing priming site | Sequencing into the insert on the end opposite of the primary read |

| Index sequencing priming site | Optional | Complementary to the 5′-end of the sequencing priming site | Sequencing of the index |

3.5.1 Multiplexing samples with barcodes/indicies

Copy this link to clipboard

Most high-throughput sequencers produce many millions of sequence reads in a single reaction. In some cases the number of reads that can be obtained can exceed the needs of the experiment. Considering time and expense it is often desirable to pool ("multiplex") libraries from multiple experiments into a single sequencing reaction. To identify which experiment a given sequence comes from, each library is prepared using adapters containing different tags (commonly known as an index or barcode). The tags are typically short (5-7 bp) sequences that are read during sequencing. The sequence of the insert and tag can then be associated, allowing one to identify which sample the insert came from.

More on barcodes and indicies

Two kinds of sequence tags are commonly used.

Barcodes: The first (typically known as a barcode, or "inline barcode") is located in between the sequence priming site and the insert (see Figure 3.2). Sequence reads obtained using this configuration therefore contain the barcode followed by the insert. User created scripts or various available software packages can be used to sort the sequence reads by their barcode.

With some platforms problems can arise if one uses few or very similar barcodes. Be sure to consult with your sequence provider before attempting to use this multiplexing approach.

Indices: The Illumina sequencing platform also supports a second type of tag (typically known as an index, or "inline index"). The index is located within the 3′-adapter and is read using a separate sequencing reaction that follows the primary sequencing reaction. The Illumina sequencing software (and/or many independent read processing programs) then makes the association between the read and the individual library from which it came.

3.5.2 Paired-end sequencing

Copy this link to clipboard

Most current techniques are only capable of producing accurate sequence reads of 50 - 300 bases which is often less than the length of the insert. In order to increase the sequence coverage of inserts most platforms allow inserts to be sequenced from both ends. This technique (known as paired-end sequencing) can be used to increase the mapping accuracy and provides information that is useful for isoform detection. In order to use paired-end sequencing the adapters must contain a sequencing priming site that is situated on the opposite side of the insert (see Figure 3.2).

This option is supported by most of the current sequencing platforms but be sure to consult with your sequencing provider before designing experiments that utilize paired-end reads.

3.6 Addition of adapters

Copy this link to clipboard

As discussed above, platform specific sequences must be present at the end of the fragments before they can be sequenced (as shown in Figure 3.2). Several strategies have been developed for adding adapter sequences to create the asymmetrical molecule.

More on addition of adapters

3.6.1 Addition of adapters via Y-adapter PCR

Copy this link to clipboard

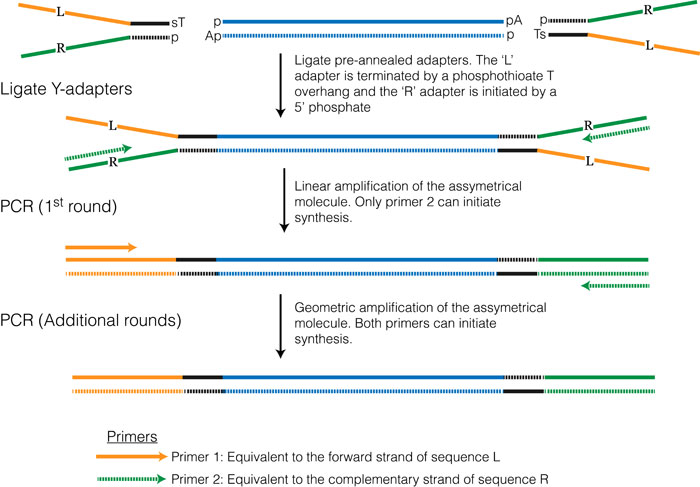

This method is one of the most commonly used and is essentially the same method that was developed for high-throughput sequencing of genomic DNA. Once the RNA is converted to double stranded cDNA the ends are blunted and adenosine overhangs are added. The adapter sequences can be added using a technique commonly referred to as Y-adapter PCR (see Figure 3.3).

Figure 3.3

3.6.2 Addition of adapters via RT/PCR

Copy this link to clipboard

Specific sequences can be added to each end of the insert during the first- and second-strand synthesis steps. In this case the reverse transcriptase primer can contain an overhanging sequence that doesn't anneal to the RNA template but contains at least a portion of the adapter sequences corresponding to those shown on the right in Figure 3.2. In a similar manner the forward PCR primer can contain over-hanging sequences corresponding to those shown on the left in Figure 3.2. Two kits (SMARTer and ScriptSeq) use this approach, and both employ proprietary chemistries and primer sequences.

3.6.3 Addition of adapters via ligation

Copy this link to clipboard

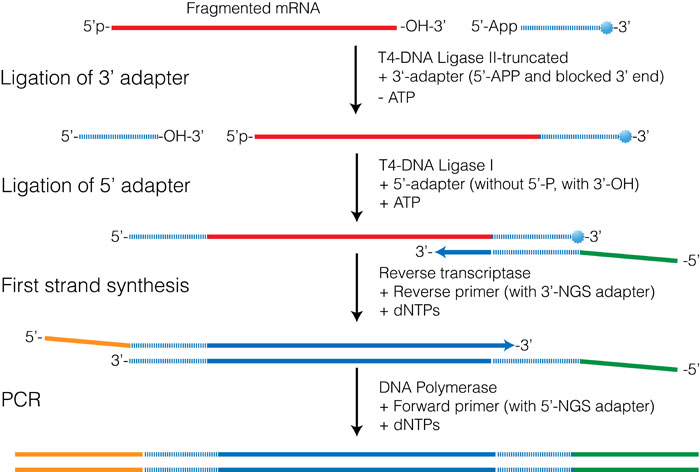

Although the technical details differ, this approach is used in the Illumina TruSeq Small RNA kit, the NEBNext Small RNA prep kit, and in the SOLiD RNA kits from Life Technologies. These kits use ligation procedures that allow two different adapters to be ligated onto each end of the target RNA. These adapters are then used to prime the first- and second-strand synthesis reactions resulting in cDNAs terminated by the appropriate adapter sequences.

Figure 3.4 shows an outline of the procedure used in both the Illumina TruSeq and in the NEBNext small RNA prep kits. The first step involves ligating an oligo to the 3′-OH of the RNA and takes advantage of the enzymatic activity of T4 RNA ligase II-truncated (T4 Rnl2-trunc) which is a modified form of T4 RNA ligase II. This enzyme can specifically ligate an oligo possessing a 5′-pre-adenylation group to RNA with a 3′-OH. Unlike the full-length ligase, T4 Rnl2-trunc is unable to adenylate the 5′-end of the RNA, preventing circularization of the RNA molecule.

The 5′-adapter is then admixed with T4 RNA ligase and ATP, all of which promotes ligation of the 5′-adapter onto the 5′-end of the RNA. One of the biggest challenges with this procedure is the formation of 5′-adapter-3′-adapter products that can form during the second reaction. In the NEBNext Small RNA prep kit this reaction is quenched by the addition of the RT-primer before the second ligation step. The RT-primer is a DNA oligo that contains sequence complementary to the 5′-end of the 3′-adapter oligo. Free 3′-adapters therefore form a double-stranded molecule that is unable to act as a substrate for the ligation reaction.

Figure 3.4

3.7 Preparation of stranded libraries

Copy this link to clipboard

Conventional RNA-seq library protocols involve converting the RNA to double-stranded cDNA followed by the ligation of sequencing adapters. Although Y-adapter PCR results in an asymmetrical molecule, in reality the final libraries contain two populations of molecules with respect to the original RNA template. In one population the strand that is sequenced corresponds to the sense strand while in the other the strand that is sequenced corresponds to the anti-sense strand. Thus the 'strandedness' of the original RNA template is lost.

Why does this matter? Knowledge of which strand a read comes from may not be critical for measuring differential gene expression since most read aligners (see "Aligning RNA-seq reads to a reference") have settings that allow them to evaluate both the initial read and its reverse-complement. However, for genomes in which transcribed regions overlap (not most eukaryotic organisms), knowledge of strandedness may help assign reads to genes adjacent to one another but on opposite strands. In addition, if one is primarily interested in annotation strandedness can be used to discover features such as the existence of overlapping anti-sense transcripts.

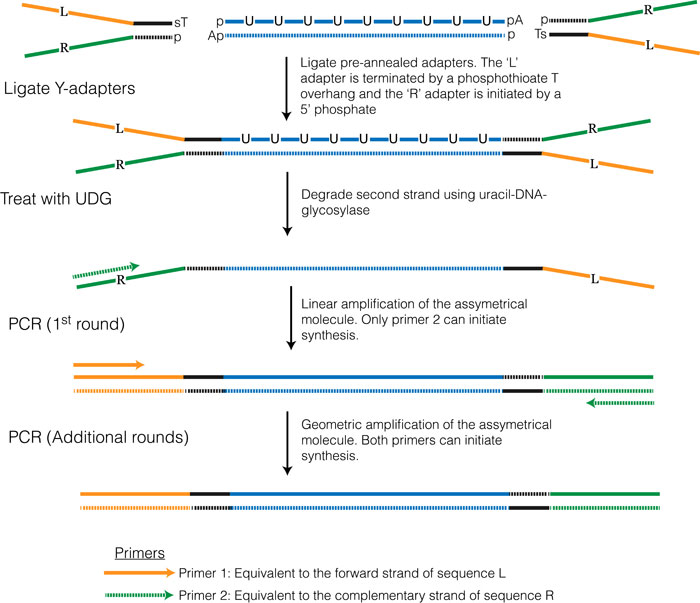

More on stranded protocols

Various 'strand-specific' library preparation protocols have been developed and several of these were reviewed in Levin et.al. 2009. The method that has become the most popular (and performed well according to Levin et.al. 2009) takes advantage of the fact that E. coli DNA polymerase can synthesize DNA using either dUTP or dTTP. In this protocol dUTP is used for synthesis of the second strand, which essentially marks this strand. Y-adapters are then ligated onto both ends in the same manner as in the non-strand-specific protocol. Two approaches have been developed to selectively amplify the non-dUTP strand. One approach (see Figure 3.5 below and outlined in Borodina et al. 2011) involves adding uracil-DNA-glycosylase (UDG) which cleaves the second strand where dUTP has been incorporated, effectively preventing this strand from being amplified during the subsequent PCR amplification. The second approach (which is currently used in the Illumina TruSeq Stranded RNA-seq library preparation kit) involves using a DNA polymerase that is incapable of extending past dUTP bases.

Figure 3.5

3.8 Validation and Quantification

Copy this link to clipboard

To achieve the highest quality of data, it is important to validate the quality and accurately quantify the cDNA libraries before sequencing. As discussed in the RNA preparation section, each of the commonly used methods has certain drawbacks. Absorbance-based methods can lead to erroneous measurements due to residual primers and dNTPs and may not be sensitive enough to accurately measure the library concentrations if the library yield is low. Fluorescence-based techniques generally are more sensitive to RNA concentration but less sensitive to contaminants. If multiplexing multiple libraries in a single sequencing reaction, make sure to adjust the relative contributions from each library to suit your experimental design needs. Other robust options for quantification include quantitative PCR (qPCR) and estimation of fragment concentration using electrophoretic instruments like the ABI Bioanalyzer.

Assessing the fragment size distribution of final RNA-seq libraries is also important. The sizes of the molecules should fall into the expected size range (depending on protocol), and the libraries should be free of ligation/PCR artifacts (usually small relative to the desired molecules). Fragment sizes can be evaluated via electrophoresis, preferably using a sensitive instrument such as an ABI Bioanalyzer.

4. Sequencing

Copy this link to clipboard

-

Design ExperimentSet up the experiment to address your questions.

-

RNA PreparationIsolate and purify input RNA.

-

Prepare LibrariesConvert the RNA to cDNA; add sequencing adapters.

-

SequenceSequence cDNAs using a sequencing platform.

-

AnalysisAnalyze the resulting short-read sequences.

4.1 Overview

Copy this link to clipboard

Sequencing technologies are rapidly expanding and new sequencing chemistries are being developed at a rapid pace. A number of sequencing platforms are widely available, including Illumina, Ion Torrent, and PacBio. The first nanopore sequencing devices are now being marketed by Oxford Nanopore while several legacy technologies can still be found, including Life’s 454 and SOLiD. Each is based upon different proprietary chemistries and technologies and each has unique strengths and weaknesses. A good overview of several platforms is provided by Metzker (2009).

4.2 Choosing a sequencing platform

Copy this link to clipboard

One of the first steps that must be done when designing an RNA-seq experiment is choosing a sequencing platform. It is critical to understand that short-read sequencing requires the addition of specific adapter sequences to the ends of the cDNA molecules of interest. (This is covered in more detail under "Library Preparation"). Since each platform uses unique proprietary adapters the correct adapters must be added to match the sequencing platform.

Although many sequencing platforms exist some are better suited for RNA-seq than others. The current leading platform for RNA-seq is Illumina. This platform enables deep sequencing which is generally important for RNA-seq, and provides long enough, low-error reads that are suitable for mapping to reference genomes and transcriptome assembly. For detailed comparisons of the available platforms see Liu et al. (2012) and Glenn (2011). A useful online comparison can be found at bluseq. The PacBio platform is also beginning to be used for transcriptome assembly, although its use is still in the early stages. Each read from a PacBio is often long enough to recapitulate a full length cDNA transcript.

4.3 Sample preparation and submission

Copy this link to clipboard

Before initiating an RNA-seq experiment users should first consult with the sequencing facility they intend to use to make sure they understand the sample preparation and submission requirements. Some general issues that need to be considered are:

- That the samples are clean and free of major contaminants.

- The primary DNA molecules contain inserts of the correct size.

- The primary DNA molecules have adapters on each end.

- The sample concentration is appropriate.

- The samples are suspended in appropriate buffers.

Furthermore, the facility needs to know:

- The sequence of the sequence-priming site.

- The length of the read you desire.

- Whether you want single-end or paired-end reads.

- Whether there is a barcode or index sequence.

- If using Illumina sequencing the facility also needs to be notified if the inserts contain a region of low sequence complexity immediately after the sequence-priming site (i.e. a barcode).

5. Analysis

Copy this link to clipboard

-

Design ExperimentSet up the experiment to address your questions.

-

RNA PreparationIsolate and purify input RNA.

-

Prepare LibrariesConvert the RNA to cDNA; add sequencing adapters.

-

SequenceSequence cDNAs using a sequencing platform.

-

AnalysisAnalyze the resulting short-read sequences.

5.1 Overview

Copy this link to clipboard

The analysis and interpretation of genomic data generated by sequencing are arguably among the most complex problems modern scientists face. A staggering degree of effort by biologists, computer scientists, mathematicians, and statisticians is currently leveled at curating, manipulating, and interpreting this information. RNA-seq is subject to many of the same issues other sequencing applications face, in addition to some nuances. Please keep in mind that the nature of questions people may address using RNA-seq data is effectively limitless, so there are even more facets to the analysis of transcriptomes than there are to generating the data itself. As a disclaimer, we emphasize that it is virtually impossible to stay abreast of all developments in approaches to analyzing RNA-seq data. Our objective rather is to provide in the following outline a rough guide to commonly encountered steps and questions one faces on the path from raw RNA-seq data to biological conclusion.

To generalize, however, embarking on an RNA-seq study usually requires initial filtering of sequencing reads, assembling those reads into transcripts or aligning them to reference sequences, annotating the putative transcripts, quantifying the number of reads per transcript, and statistical comparison of transcript abundance across samples or treatments.

Stereotypical RNA-seq Analysis Pipeline

- Demultiplex, filter, and trim sequencing reads.

- Normalize sequencing reads (if performing de novo assembly)

- de novo assembly of transcripts (if a reference genome is not available)

- Map (align) sequencing reads to reference genome or transcriptome

- Annotate transcripts assembled or to which reads have been mapped

- Count mapped reads to estimate transcript abundance

- Perform statistical analysis to identify differential expression (or differential splicing) among samples or treatments

- Perform multivariate statistical analysis/visualization to assess transcriptome-wide differences among samples